
On June 9th, 2020, IBM Cloud data centers suffered a global outage that caused connectivity issues for many of the web sites and platforms utilizing the service, including BleepingComputer.

For BleepingComputer, the outage started at approximately 6:05 PM EST, and services were restored around 9:00 PM.

Lawrence Abrams, the owner of BleepingComputer, who saw the site go down minutes after posting a new story, said the most infuriating part was that there was no trouble report from IBM, and their status report did not show any issue.

It wasn’t until 8:26 PM EST that IBM Cloud’s Twitter account finally posted an update with little information.

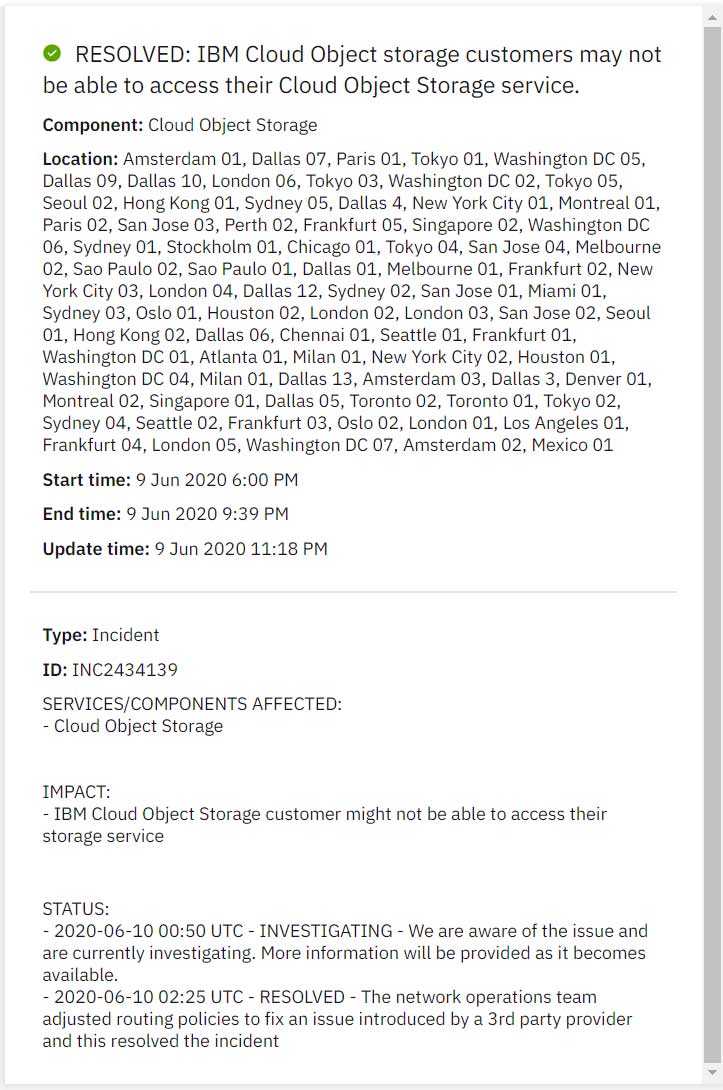

The next day, IBM updated the announcement it had posted during the outage, attributing the issue to an external network provider.

“IBM is focused on external network provider issues as the cause of the disruption of IBM Cloud services on Tuesday, June 9. All services have been restored.”

The announcement continued, “A detailed root cause analysis is underway. An investigation shows an external network provider flooded the IBM Cloud network with incorrect routing, resulting in severe congestion of traffic and impacting IBM Cloud services and our data centers. Mitigation steps have been taken to prevent a [recurrence]. Root cause analysis has not identified any data loss or cybersecurity issues.”

As can be seen from the screenshot above, the impact was large-scale.

Cloud data centers across major cities around the world were knocked offline, prompting experts to question whether this was indeed a simple routing mistake or an intentional BGP hijacking attack.

Multiple users on Twitter demanded a further explanation from the company and a postmortem report of the incident.

For the Internet to work, independently operated networks known as autonomous systems (ASes) advertise the IP prefixes they manage and the routes over which they can carry traffic. However, this is largely a trust-based system that assumes every network is telling the truth.

Given the massively interconnected nature of the Internet, it is hard to enforce honesty on every single network that takes part in it.

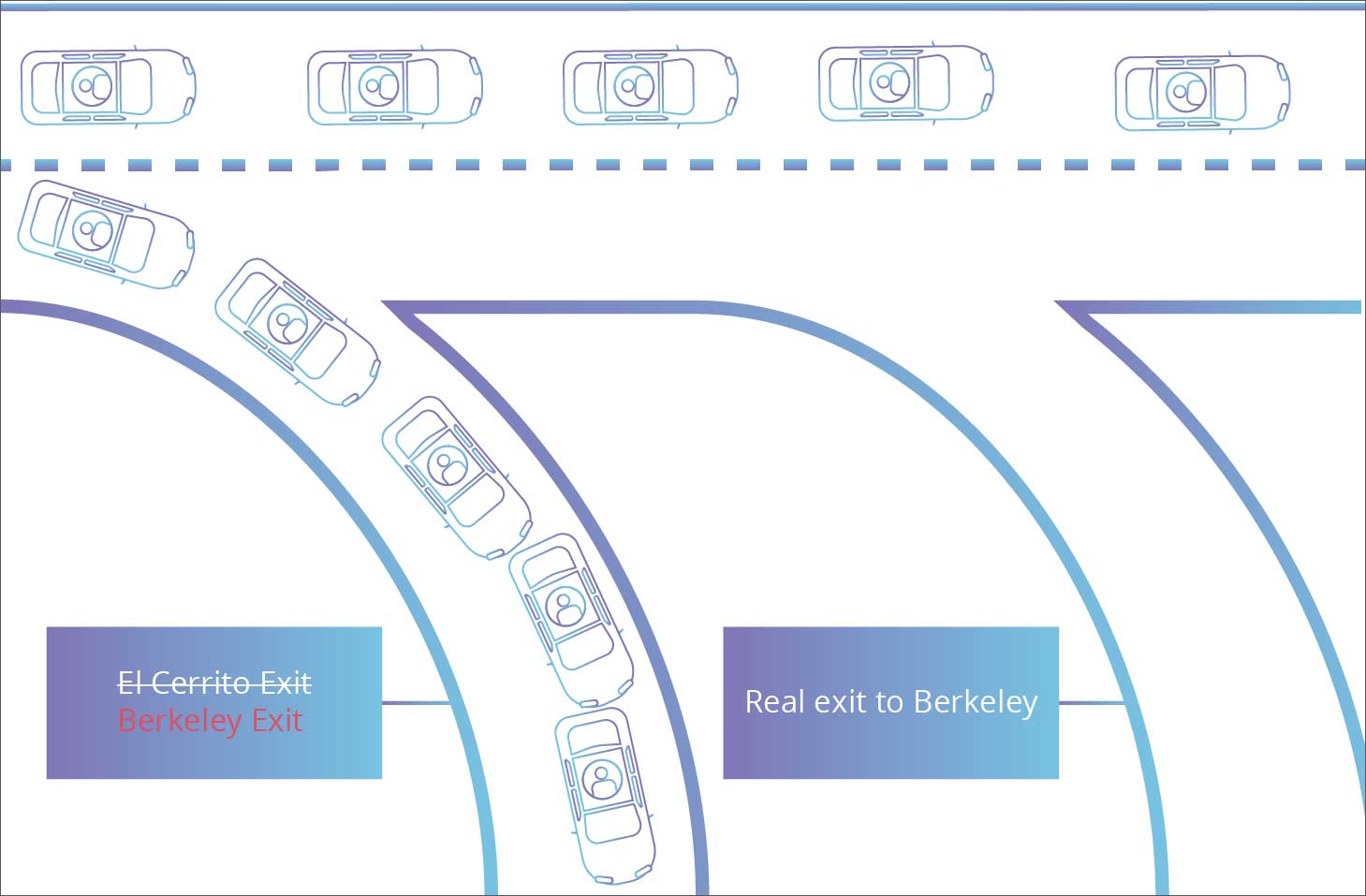

In simple terms, when you want to connect to BleepingComputer’s servers, a series of intermediary routers passes your request along until it reaches the right destination. These intermediary devices tell each other where to send traffic, much like real-world post-office systems or highway signs.

In the world of computing, this is facilitated between Autonomous Systems by the Border Gateway Protocol (BGP).
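To make the idea of advertising routes concrete, here is a minimal sketch of how an announcement from one autonomous system might spread to the others. The topology, the AS numbers (taken from the range reserved for documentation), and the `propagate` function are all hypothetical, and real BGP applies routing policies and tie-breaking rules that this toy model ignores.

```python
from collections import deque

# Hypothetical AS-level topology: each AS number maps to its neighbors.
NEIGHBORS = {
    64500: [64501, 64502],
    64501: [64500, 64503],
    64502: [64500, 64503],
    64503: [64501, 64502, 64504],
    64504: [64503],
}


def propagate(origin_as, neighbors):
    """Spread an announcement from origin_as to every reachable AS.

    Returns {asn: route}, where route lists the AS numbers traffic would
    cross, starting at the local AS and ending at the origin. Each AS keeps
    the first (shortest) route it hears, which is a simplification.
    """
    routes = {origin_as: [origin_as]}
    queue = deque([origin_as])
    while queue:
        current = queue.popleft()
        for peer in neighbors[current]:
            if peer not in routes:
                # The peer learns the route from `current` and puts itself in front.
                routes[peer] = [peer] + routes[current]
                queue.append(peer)
    return routes


routes = propagate(64500, NEIGHBORS)
for asn in sorted(routes):
    print(asn, "reaches the prefix via", routes[asn])
```

Note that nothing in this sketch checks whether the origin really owns the prefix it announces. Every AS simply accepts what its neighbor says, which is the trust assumption described above.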


Route hijacking occurs when a malicious entity manages to “falsely advertise” to other routers that it owns a specific set of IP addresses when it doesn’t. When this happens, chaos occurs.

For example, if your router wants to connect to BleepingComputer and is flooded with multiple “routes” to pick from, which one does it choose? Moreover, what happens when one or more of those routes lead you not to BleepingComputer but to an attacker’s system impersonating it?

This route confusion would create a lot of trouble on the Internet and lead to delays, traffic congestion, or total outages.
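One common way a false route wins is by being more specific than the real one, because routers generally prefer the longest matching prefix. The sketch below illustrates that rule with Python's standard `ipaddress` module. The prefixes and AS numbers are documentation values, and `best_route` is a hypothetical helper, not a real router's full decision process.

```python
import ipaddress

# Hypothetical routing table entries: (announced prefix, origin AS).
ROUTES = [
    (ipaddress.ip_network("203.0.113.0/24"), 64500),  # legitimate origin
]


def best_route(routes, destination):
    """Pick the matching route with the longest prefix, or None if none match."""
    dest = ipaddress.ip_address(destination)
    candidates = [(net, asn) for net, asn in routes if dest in net]
    if not candidates:
        return None
    return max(candidates, key=lambda route: route[0].prefixlen)


before = best_route(ROUTES, "203.0.113.10")

# A hijacker (hypothetical AS 64511) announces a more specific /25.
hijacked = ROUTES + [(ipaddress.ip_network("203.0.113.0/25"), 64511)]
after = best_route(hijacked, "203.0.113.10")

print("before hijack, origin AS:", before[1])
print("after hijack, origin AS:", after[1])
```

Addresses outside the hijacked /25, such as 203.0.113.200, would still follow the legitimate /24 in this model, which is one reason a hijack can affect only part of a network's traffic.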

An excellent analogy presented by Cloudflare likens BGP hijacking to an adversary changing road signs, redirecting drivers under the pretense of leading them to their intended destination.

The page reads, “Roughly speaking, if DNS is the Internet’s address book, then BGP is the Internet’s road map.”

Because BGP routing is a “trust-based” system, every routing device on the chain (much like signboards on a highway) is expected to tell the truth. If even one compromised device on the chain “lies,” connections will suffer, outages will occur, and Internet traffic will get lost.
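As a rough illustration of what checking an announcement, rather than trusting it, could look like, the sketch below compares announcements against a list of authorized origins. It is loosely modeled on the general idea of route origin validation and is not something IBM or the incident reports describe. The `AUTHORIZATIONS` table, the AS numbers, and `check_origin` are all hypothetical.

```python
import ipaddress

# Hypothetical authorization records:
# (covering prefix, longest prefix length allowed, authorized origin AS).
AUTHORIZATIONS = [
    (ipaddress.ip_network("203.0.113.0/24"), 24, 64500),
]


def check_origin(prefix, origin_as, authorizations=AUTHORIZATIONS):
    """Classify an announcement as 'valid', 'invalid', or 'unknown'."""
    announced = ipaddress.ip_network(prefix)
    covered = False
    for auth_net, max_length, auth_as in authorizations:
        if announced.version != auth_net.version:
            continue  # never compare IPv4 prefixes with IPv6 ones
        if announced.subnet_of(auth_net):
            covered = True
            if origin_as == auth_as and announced.prefixlen <= max_length:
                return "valid"
    # Covered by a record but not matching it means someone is "lying";
    # no covering record at all means there is nothing to check against.
    return "invalid" if covered else "unknown"


print(check_origin("203.0.113.0/24", 64500))   # the authorized origin
print(check_origin("203.0.113.0/25", 64511))   # a more specific, unauthorized route
print(check_origin("198.51.100.0/24", 64511))  # no record covers this prefix
```

The "unknown" result matters in practice: a check like this can only catch false announcements for prefixes whose owners have published an authorization in the first place.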

A significant case of BGP hijacking occurred in 2008, when YouTube went offline for its global audience after some of its traffic was redirected through a Pakistani network. We have reported similar incidents in the years since.

In the case of IBM Cloud, another company, Catchpoint, confirmed that its traceroute monitors located within IBM’s cloud saw network routing issues on outbound traffic.

“We have verified that there were network routing issues on egress traffic thru our active traceroute monitors situated within IBM’s cloud. To further diagnose, our BGP experts are looking at the full table data. Here’s a blog showing how we saw the outage,” Catchpoint stated.

At this time, IBM has restored all services, and an investigation is in progress into how an outage of this scale occurred.
